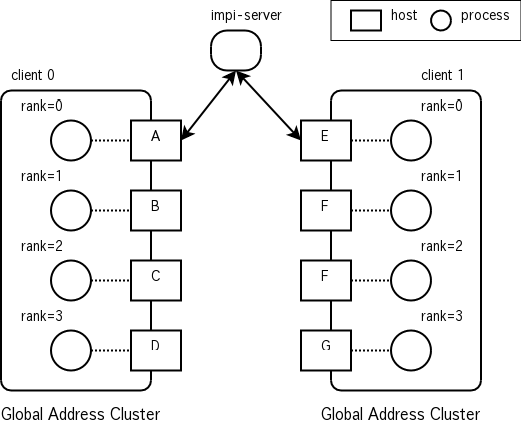

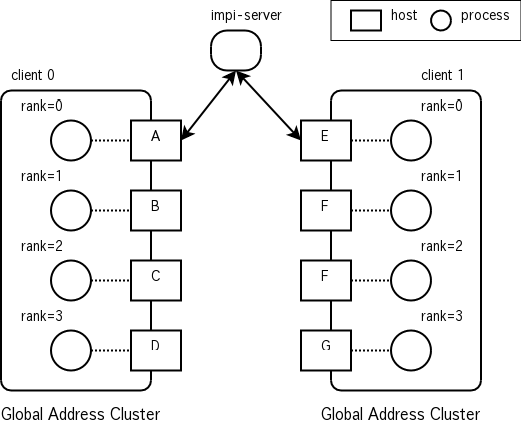

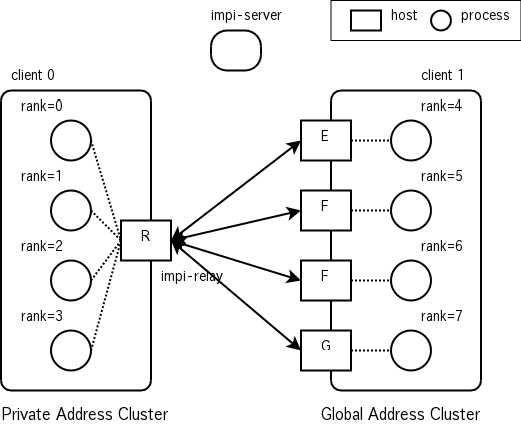

Figure 1. Initialization (w/o IMPI relay)

The following terms, which are based on the terms defined in the IMPI specification, are used in this document and in others.

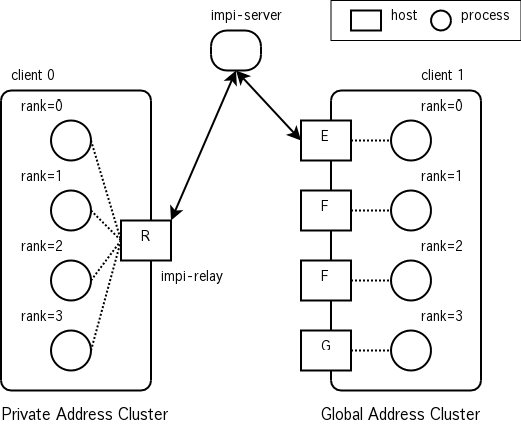

IMPI Relay is a forwarding mechanism that works transparently at the level of the IMPI protocol, bridging nodes that have only private addresses in a cluster to nodes with global addresses. The Relay must run on a node that has both a private address and a global address. It forwards only IMPI protocol data; it is not a general-purpose mechanism such as NAT (Network Address Translation).

When a single Relay is used, its operation is transparent to the IMPI clients, and any implementation of the IMPI protocol should work with it. Sharing the load among multiple Relays requires distributing the traffic, which deviates from the IMPI protocol and requires slight modifications to the IMPI implementation.

Without IMPI Relay, each process is paired with one host (one-to-one mapping). With IMPI Relay, the Relay acts as the host, and all processes in the cluster are attached to it (one-to-many mapping).
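The difference between the two mappings can be sketched in a few lines of Python. This is only an illustration of the idea, not GridMPI code: the function `host_view` and the node names `node-a`, `node-b`, and `relay0` are hypothetical.

```python
from collections import defaultdict

def host_view(processes, relay=None):
    """Group process ranks by the host that the remote side sees.

    processes: list of (rank, node_name) pairs in one cluster.
    relay: name of the relay node, or None when no relay is used.
    (Hypothetical helper for illustration only.)
    """
    view = defaultdict(list)
    for rank, node in processes:
        # With a relay, every process appears to belong to the relay host.
        visible_host = relay if relay is not None else node
        view[visible_host].append(rank)
    return dict(view)

cluster = [(2, "node-a"), (3, "node-b")]
print(host_view(cluster))                  # one-to-one: {'node-a': [2], 'node-b': [3]}
print(host_view(cluster, relay="relay0"))  # one-to-many: {'relay0': [2, 3]}
```

Without a relay, each node shows up as its own host; with a relay, the remote side sees a single host that owns every rank in the cluster.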

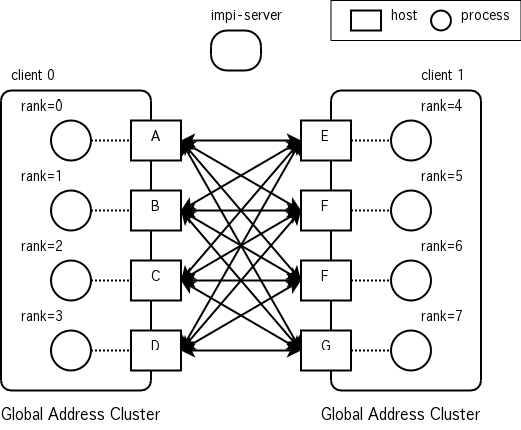

IMPI Relay does two kinds of work. The first is relaying IMPI command messages between the IMPI Server and the Agent. The second is relaying data messages between hosts in each cluster. In the first half of the initialization phase, the client with the smallest rank number becomes the Agent of its cluster. The Agent communicates with the IMPI Server to gather and distribute the client information, as shown in Figures 1 and 2. In the second half of the initialization phase, the hosts establish all-to-all connections and the rank numbers are renumbered, as shown in Figures 3 and 4. After that, MPI messages are sent and received over those connections.
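The two halves of the initialization phase can be illustrated with a small Python sketch. The Agent selection follows the rule stated above (smallest rank number). The renumbering rule is not spelled out here, so the sketch assumes that global ranks are assigned in client order and then in local rank order; the function names `pick_agent` and `renumber` are hypothetical.

```python
def pick_agent(local_ranks):
    """Return the rank that acts as the Agent: the smallest rank in the cluster."""
    return min(local_ranks)

def renumber(clusters):
    """Map (client_id, local_rank) to a global rank.

    clusters: dict of client_id -> list of local ranks.
    Assumption (not confirmed by the text): global ranks are handed out
    in client id order, then in local rank order.
    """
    mapping = {}
    next_rank = 0
    for client_id in sorted(clusters):
        for local_rank in sorted(clusters[client_id]):
            mapping[(client_id, local_rank)] = next_rank
            next_rank += 1
    return mapping

clusters = {0: [0, 1], 1: [0, 1]}
agents = {cid: pick_agent(ranks) for cid, ranks in clusters.items()}
print(agents)              # {0: 0, 1: 0}
print(renumber(clusters))  # {(0, 0): 0, (0, 1): 1, (1, 0): 2, (1, 1): 3}
```

Under this assumption, two clients with two processes each end up with global ranks 0 to 3.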

IMPI Relay forwards communications between the IMPI Server and the Agent in the private address cluster.

IMPI Relay forwards communications between the private address cluster and the external cluster.

See Structure of GridMPI Execution for a description of a normal GridMPI execution.

The IMPI Relay is a proxy process for the IMPI protocol, which is used for inter-cluster communication. MPI processes on client1 communicate with the IMPI server and with the MPI processes on client0 via the IMPI Relay. In this case, the IMPI Relay appears to client0 as one host with two processes (rank2, rank3), and it appears to client1 as one host with two processes (rank0, rank1).

                          IMPI Protocol
          +---------+========================+
          |         |                        |
    +-----|---------|-----+       +----------|----------+  +--------+
    |     |         |     |       |      +-------+      |  | impi-  |
    |     |         |     |       |      | relay |      |  | server |
    |     |         |     |       |      +-------+      |  +--------+
    |     |         |     |       |       /     \       |
    | +-------+ +-------+ |       | +-------+ +-------+ |
    | | rank0 | | rank1 | |       | | rank2 | | rank3 | |
    | +-------+ +-------+ |       | +-------+ +-------+ |
    |     |         |     |       |     |         |     |
    |     +=========+     |       |     +=========+     |
    |   YAMPI Protocol    |       |   YAMPI Protocol    |
    +---------------------+       +---------------------+
       mpirun -client 0              mpirun -client 1